Missing Tracking

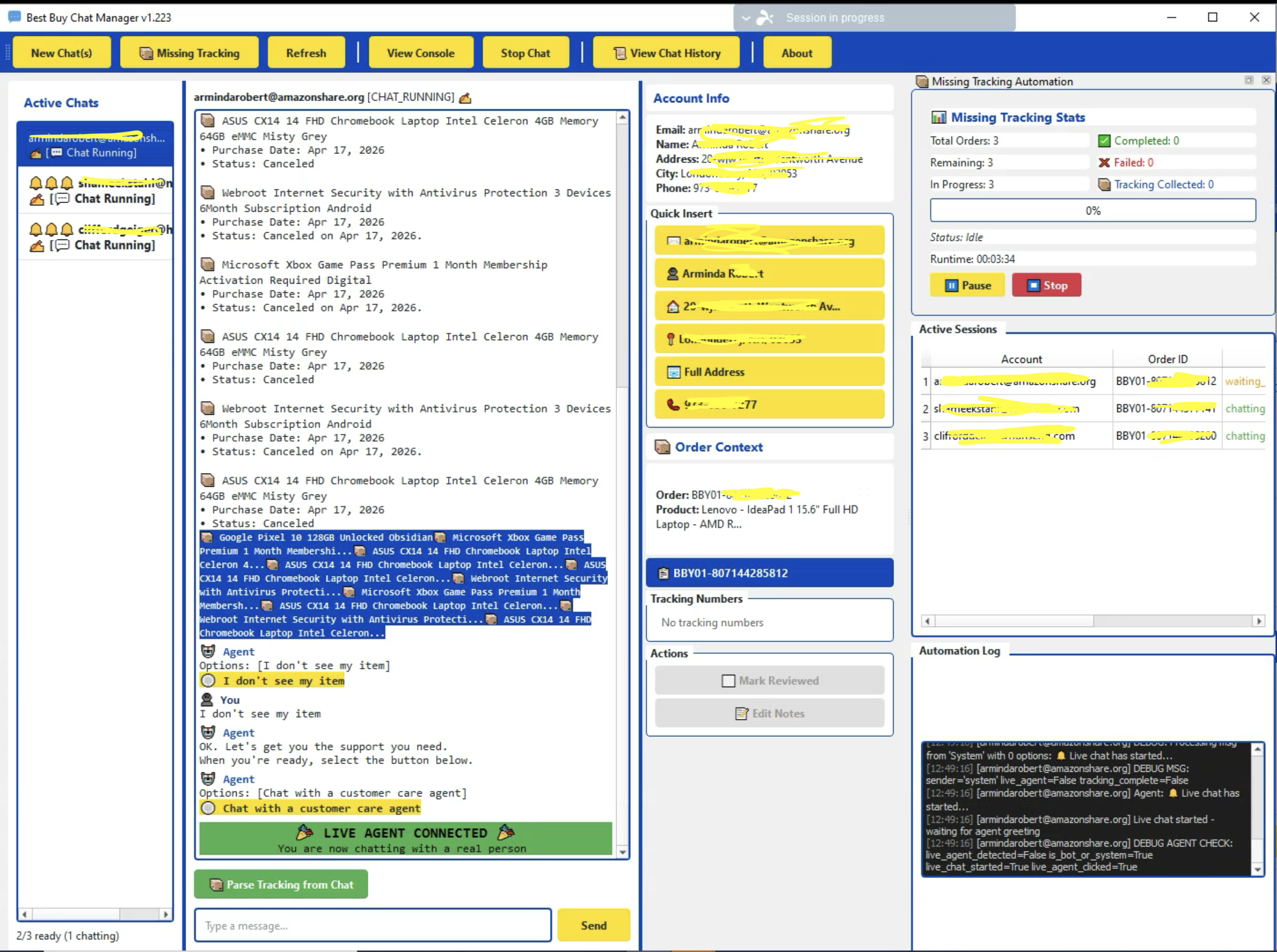


A multi-session automation platform that combines browser automation, AI-assisted responses, structured logging, and a training-data feedback loop to handle customer service chats — missing tracking, refunds, replacements, exchanges, price matches, and manual review — at scale.

Client identity and proprietary details are anonymized. Architecture, capabilities, and outcomes are accurate.

High-volume e-commerce operations generate a constant stream of repetitive support conversations: missing tracking numbers, refund requests, replacements, exchanges, price matches, and general order questions. Done by hand, each one takes a person several minutes, the phrasing varies between operators, outcomes are inconsistent, and there is rarely a clean audit trail of what was said or what was decided.

As order volume grows, the support workload grows with it, and the cost of inconsistency, missed cases, and lost data compounds. The team needed a system that could conduct or assist these conversations across many sessions at once, log every interaction, and improve over time.

ThinkGenius designed and built a production-grade automation platform — not a chatbot. The system drives real customer service chat sessions inside isolated browser profiles, conducts conversations through pattern-matched logic and AI-generated suggestions, extracts structured outcomes (tracking numbers, case IDs, approvals), and writes everything to a centralized database. Operators run the platform from a purpose-built desktop application that supports both fully automated and human-in-the-loop modes.

Identifies orders likely missing tracking, opens a chat, requests the missing numbers, handles verification, and writes validated tracking data back to the database — typically end-to-end with no human involvement.

Drives the conversation toward initiating a refund for missing, damaged, or undelivered items, tracking each stage from issue description through case ID collection.

Routes the conversation toward a replacement order rather than a refund, including the address confirmation and quantity steps that the replacement flow requires.

Handles product exchange flows, including the additional verification and representative navigation that distinguish exchanges from refunds and replacements.

Opens the chat, waits for a live agent, and sends a dynamically populated opening message with the order context. The operator then takes over with full conversation logging in place.

Lets a human operator conduct the chat directly through the application while the system logs every message, sender, timestamp, and outcome — feeding a structured training dataset that improves future automation.

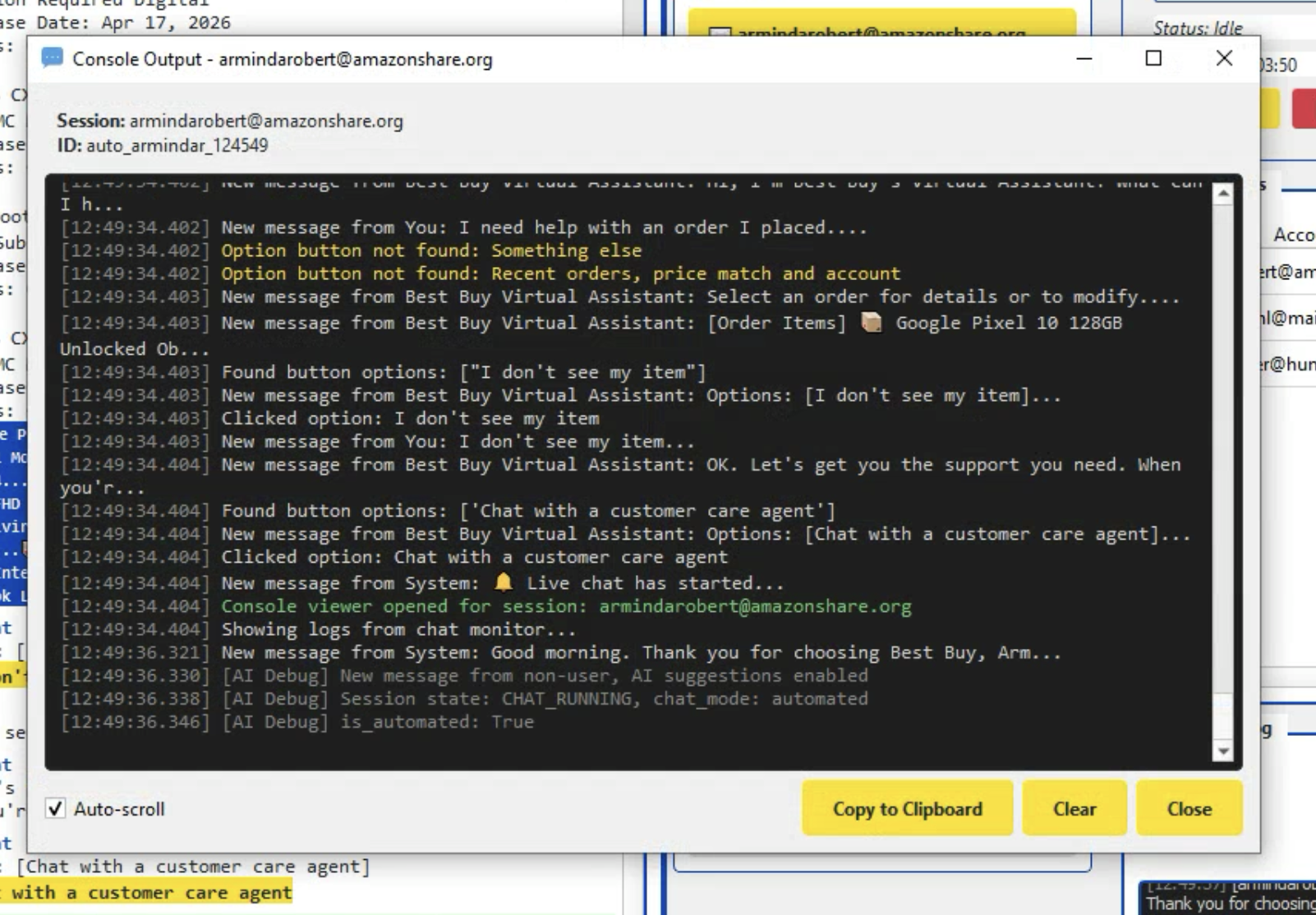

The platform is structured as a multi-process desktop application with isolated worker processes per chat session, all coordinating through a centralized database. Each layer has a single responsibility, and failures in one session never affect another.

Every subsystem reads and writes through one canonical schema, so any session can be replayed, audited, and analyzed from any machine with database access — no log files to collect, no local state to chase down.

The AI integration is grounded and specific. It is not a general chatbot — it has one job: given the current state of a support conversation, suggest the most appropriate next response, with a confidence score and reasoning.
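As a rough sketch of what one such suggestion could look like in code, the record below carries the proposed reply, a confidence score, and the reasoning. The field names, the 0-to-1 confidence range, and the `should_auto_send` threshold are illustrative assumptions, not details taken from the platform.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Suggestion:
    """One AI-suggested next response (hypothetical field names)."""
    stage: str          # conversation stage the suggestion applies to
    text: str           # proposed reply to send
    confidence: float   # assumed to lie between 0.0 and 1.0
    reasoning: str      # short explanation of why this reply fits

    def __post_init__(self) -> None:
        if not 0.0 <= self.confidence <= 1.0:
            raise ValueError("confidence must be between 0.0 and 1.0")


def should_auto_send(s: Suggestion, threshold: float = 0.85) -> bool:
    """Assumed policy: send without review only above a confidence threshold."""
    return s.confidence >= threshold
```

In a human-in-the-loop mode, a suggestion below the threshold would simply be shown to the operator instead of being sent.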

Manual mode is not a fallback — it is a deliberate feedback mechanism. When an operator conducts a chat through the app, every message from both sides is captured along with timestamps, the chat mode, the account, the order, and the final outcome.

Over time this accumulates into a structured dataset showing how experienced operators actually handle each workflow.

This data feeds directly back into AI prompt refinement, stage-detection improvements, and the pattern library used by the automation. Each manual conversation makes the next automated conversation a little better.

The missing tracking workflow is one specific automation within the broader platform — and a good illustration of how analytical and conversational logic combine in a single pipeline.

A centralized MySQL database on AWS is the backbone of the system. Every session, message, suggestion, decision, and failure is captured in a structured schema so operators and developers always have the full picture.

One record per session with mode, account, order, message counts by sender, and a precise outcome code. Every individual message is logged separately with sender role, timestamp, and any clickable options.

Every AI suggestion is stored with its stage, confidence, and the operator's action. Explicit thumbs up/down ratings store the full conversation context for downstream quality analysis.

Collected tracking numbers are deduped per order and linked to the chat session that produced them, providing full provenance from order to conversation to recovered shipment.
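A minimal sketch of per-order deduplication, assuming a unique constraint on the order and tracking number pair. The table and column names are hypothetical, and SQLite stands in for the MySQL database so the example is self-contained (MySQL's closest equivalent to `INSERT OR IGNORE` is `INSERT IGNORE`).

```python
import sqlite3

# Hypothetical schema: one row per (order, tracking number), linked to its chat session.
TRACKING_SCHEMA = """
CREATE TABLE tracking_numbers (
    order_id        TEXT    NOT NULL,
    tracking_number TEXT    NOT NULL,
    chat_session_id INTEGER NOT NULL,
    UNIQUE (order_id, tracking_number)
);
"""


def save_tracking(conn: sqlite3.Connection, order_id: str,
                  chat_session_id: int, numbers: list[str]) -> int:
    """Store tracking numbers for an order; return how many were new."""
    inserted = 0
    for raw in numbers:
        normalized = raw.strip().upper()  # assumed normalization rule
        cur = conn.execute(
            "INSERT OR IGNORE INTO tracking_numbers "
            "(order_id, tracking_number, chat_session_id) VALUES (?, ?, ?)",
            (order_id, normalized, chat_session_id),
        )
        inserted += cur.rowcount  # 0 when the unique constraint rejected the row
    conn.commit()
    return inserted
```

Because the constraint lives in the database, a number repeated by the agent, or collected again in a later session, is rejected without any application-side bookkeeping.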

Work queues are first-class tables with status tracking and unique constraints, preventing duplicate processing across machines while supporting incremental analysis.
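One way such a queue could work is sketched below: a unique constraint blocks duplicate enqueues, and a conditional update lets exactly one worker claim each row. The schema, status values, and function names are assumptions for illustration, with SQLite standing in for MySQL.

```python
import sqlite3
from typing import Optional

# Hypothetical queue table: each order can be queued at most once.
QUEUE_SCHEMA = """
CREATE TABLE work_queue (
    id        INTEGER PRIMARY KEY,
    order_id  TEXT NOT NULL UNIQUE,
    status    TEXT NOT NULL DEFAULT 'pending',
    worker_id TEXT
);
"""


def enqueue(conn: sqlite3.Connection, order_id: str) -> bool:
    """Add an order to the queue; return False if it was already queued."""
    cur = conn.execute(
        "INSERT OR IGNORE INTO work_queue (order_id) VALUES (?)", (order_id,)
    )
    conn.commit()
    return cur.rowcount == 1


def claim_next(conn: sqlite3.Connection, worker_id: str) -> Optional[str]:
    """Claim the oldest pending order for this worker, or return None."""
    while True:
        row = conn.execute(
            "SELECT id, order_id FROM work_queue "
            "WHERE status = 'pending' ORDER BY id LIMIT 1"
        ).fetchone()
        if row is None:
            return None
        # The status check in WHERE makes the claim atomic: if another worker
        # claimed this row first, rowcount is 0 and we try the next one.
        cur = conn.execute(
            "UPDATE work_queue SET status = 'in_progress', worker_id = ? "
            "WHERE id = ? AND status = 'pending'",
            (worker_id, row[0]),
        )
        conn.commit()
        if cur.rowcount == 1:
            return row[1]
```

The same claim pattern extends to several workers on several machines, since the only shared state is the table itself.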

Every failed message send is recorded with error type, retry count, and a JSON snapshot of session health, supporting precise post-mortem analysis of reliability issues.

Unhandled exceptions are captured with full stack trace, OS, Python version, and active session context — written to the database before exit so triage never depends on log file collection.
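A sketch of how crash capture of this kind might be wired up with Python's `sys.excepthook`. The `crash_reports` table, its columns, and the helper names are hypothetical, and SQLite is used in place of the production database.

```python
import json
import platform
import sqlite3
import sys
import traceback

# Hypothetical table for crash reports.
CRASH_SCHEMA = """
CREATE TABLE IF NOT EXISTS crash_reports (
    id              INTEGER PRIMARY KEY,
    error_type      TEXT NOT NULL,
    stack_trace     TEXT NOT NULL,
    os              TEXT NOT NULL,
    python_version  TEXT NOT NULL,
    session_context TEXT NOT NULL
);
"""


def record_crash(conn, exc_type, exc, tb, session_context: dict) -> None:
    """Write one crash report row, including the full stack trace."""
    conn.execute(
        "INSERT INTO crash_reports "
        "(error_type, stack_trace, os, python_version, session_context) "
        "VALUES (?, ?, ?, ?, ?)",
        (
            exc_type.__name__,
            "".join(traceback.format_exception(exc_type, exc, tb)),
            platform.platform(),
            platform.python_version(),
            json.dumps(session_context),
        ),
    )
    conn.commit()


def install_crash_hook(conn, session_context: dict) -> None:
    """Record unhandled exceptions in the database before the process exits."""
    def hook(exc_type, exc, tb):
        try:
            record_crash(conn, exc_type, exc, tb, session_context)
        finally:
            # Still print the traceback the usual way.
            sys.__excepthook__(exc_type, exc, tb)

    sys.excepthook = hook
```

A worker would call `install_crash_hook` once at startup, passing whatever session details it wants attached to a report.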

Verbose sessions capture HTML snapshots, network activity, and state transitions directly to the database, making any session fully introspectable from any developer machine.

Every session ends with a precise outcome string (tracking_collected:3, refund_approved, failed: no live agent) — the foundation for every analytics and improvement workflow.
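The three example strings suggest a `code` or `code:detail` shape. Assuming that shape holds in general, which the source does not confirm, a small parser could turn outcomes into structured values for analytics. The `Outcome` type and `parse_outcome` name are illustrative.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Outcome:
    code: str               # e.g. "tracking_collected", "refund_approved", "failed"
    detail: Optional[str]   # text after the first colon, if any
    failed: bool            # True when the code is exactly "failed"


def parse_outcome(raw: str) -> Outcome:
    """Split an outcome string into its code and optional detail."""
    code, sep, detail = raw.partition(":")
    code = code.strip()
    detail_value = detail.strip() if sep else ""
    return Outcome(code=code, detail=detail_value or None, failed=(code == "failed"))


# Usage with the examples from the text:
# parse_outcome("tracking_collected:3").detail   -> "3"
# parse_outcome("refund_approved").detail        -> None
# parse_outcome("failed: no live agent").failed  -> True
```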

Each analysis run records its inputs, outputs, and the highest processed session ID, enabling true incremental analysis and a historical record of automation performance.
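Incremental analysis of this kind is commonly built on a high-water mark: each run reads only sessions with an ID above the highest one recorded by earlier runs. The sketch below assumes hypothetical `chat_sessions` and `analysis_runs` tables and uses SQLite for self-containment; the analysis step itself is left as a placeholder.

```python
import sqlite3

# Hypothetical tables: sessions to analyze, and a log of analysis runs.
ANALYSIS_SCHEMA = """
CREATE TABLE chat_sessions (id INTEGER PRIMARY KEY, outcome TEXT NOT NULL);
CREATE TABLE analysis_runs (
    id                INTEGER PRIMARY KEY,
    sessions_analyzed INTEGER NOT NULL,
    max_session_id    INTEGER NOT NULL
);
"""


def run_incremental_analysis(conn: sqlite3.Connection) -> int:
    """Analyze sessions newer than the last run; return how many were processed."""
    (last_id,) = conn.execute(
        "SELECT COALESCE(MAX(max_session_id), 0) FROM analysis_runs"
    ).fetchone()
    rows = conn.execute(
        "SELECT id, outcome FROM chat_sessions WHERE id > ? ORDER BY id",
        (last_id,),
    ).fetchall()
    if not rows:
        return 0  # nothing new since the previous run
    # Placeholder: real analysis of `rows` would happen here.
    conn.execute(
        "INSERT INTO analysis_runs (sessions_analyzed, max_session_id) VALUES (?, ?)",
        (len(rows), rows[-1][0]),
    )
    conn.commit()
    return len(rows)
```

Each row in `analysis_runs` doubles as the historical record: it shows when a run happened and how far it got.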

The platform is built to run many chat sessions concurrently and to scale horizontally across machines, with coordination handled at the database level.

Modern chat widgets ship hash-suffixed CSS classes that change on every deploy. The system targets stable DOM identifiers, data-cy attributes, and structural selectors that survive widget updates.

The same chat widget routes both bot and human messages, often styled identically. Pattern matching on sender names, message structure, and explicit system events ("Live chat has started") cleanly separates the two phases.
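A simplified sketch of phase detection under these assumptions: each message is a dict with `role`, `name`, and `text` keys, and the set of bot sender names is known in advance. Only the "Live chat has started" event comes from the text above; the message shape and the bot names are hypothetical.

```python
import re
from enum import Enum


class Phase(Enum):
    BOT = "bot"
    LIVE_AGENT = "live_agent"


# System events that mark the hand-off to a human agent.
LIVE_CHAT_EVENTS = [re.compile(r"live chat has started", re.IGNORECASE)]

# Hypothetical sender names used by the automated assistant.
BOT_SENDER_NAMES = {"virtual assistant", "chatbot"}


def detect_phase(messages: list[dict]) -> Phase:
    """Return the phase the conversation is in after the given messages."""
    phase = Phase.BOT
    for msg in messages:
        if msg["role"] == "system":
            if any(p.search(msg["text"]) for p in LIVE_CHAT_EVENTS):
                phase = Phase.LIVE_AGENT
        elif msg["role"] == "agent":
            # A non-bot sender name on the agent side also signals a human.
            if msg["name"].strip().lower() not in BOT_SENDER_NAMES:
                phase = Phase.LIVE_AGENT
    return phase
```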

Real agents don't follow scripts. An extensive pattern library handles verification, small talk, hold requests, inactivity prompts, and quantity questions, with AI-generated responses for anything outside the library.

Per-account Kameleo profiles provide realistic fingerprints, persistent sessions, and per-account proxy routing. Failed headless logins automatically relaunch in headed mode and return to headless on success.

Agent responses contain tracking numbers in any format — paragraphs, lists, tables. A layered approach (regex, carrier database lookup, AI parsing) reliably produces a structured list with quantities.
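The layering could be sketched roughly as follows: a broad regex finds candidate tokens, a carrier lookup keeps only tokens that match a known format, and an AI parser is consulted only when the first two layers find nothing. The two carrier patterns are illustrative and far from exhaustive, the in-memory dict stands in for the carrier database, `ai_fallback` is a hypothetical callable, and quantity parsing is omitted.

```python
import re
from typing import Callable, Optional

# Layer 1: broad candidate pattern (any long alphanumeric token).
CANDIDATE = re.compile(r"\b[A-Z0-9]{10,30}\b")

# Layer 2: illustrative carrier formats standing in for a carrier database.
CARRIER_PATTERNS = {
    "UPS": re.compile(r"1Z[0-9A-Z]{16}"),
    "USPS": re.compile(r"9[0-9]{21}"),
}


def identify_carrier(token: str) -> Optional[str]:
    """Return the carrier whose format the token matches, if any."""
    for carrier, pattern in CARRIER_PATTERNS.items():
        if pattern.fullmatch(token):
            return carrier
    return None


def extract_tracking(
    text: str,
    ai_fallback: Optional[Callable[[str], list[str]]] = None,
) -> list[tuple[str, str]]:
    """Return (tracking_number, carrier) pairs found in an agent message."""
    results: list[tuple[str, str]] = []
    seen: set[str] = set()
    for token in CANDIDATE.findall(text.upper()):
        carrier = identify_carrier(token)
        if carrier is not None and token not in seen:
            seen.add(token)
            results.append((token, carrier))
    # Layer 3: ask the AI parser only when the cheaper layers found nothing.
    if not results and ai_fallback is not None:
        results = [(t, identify_carrier(t) or "unknown") for t in ai_fallback(text)]
    return results
```

Ordering the layers from cheapest to most expensive keeps the AI call off the common path, where the agent pastes a number in a recognizable format.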

Running eight workers against a shared queue requires careful coordination. Database-level constraints, in-progress tracking, and per-worker identity prevent duplication and keep the pool fully utilized.

All debug data and crash reports flow to the database, so a developer can introspect any session — DOM, network, state, exceptions — from any machine without shipping log files.

Tracking-collection completeness is non-trivial: agents may answer in multiple messages, provide fewer than expected, or include extras. A settling delay plus AI-assisted verification determines the true end state.
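The settling logic might reduce to a decision like the one below: keep waiting while messages are still arriving, accept the result when the count matches, and hand any mismatch to the verification step. The state names and the 20-second default are assumptions, and the AI-assisted verification itself is not shown.

```python
def collection_state(
    expected: int,
    collected: int,
    seconds_since_last_message: float,
    settle_seconds: float = 20.0,
) -> str:
    """Classify the current state of tracking-number collection."""
    if seconds_since_last_message < settle_seconds:
        # The agent may still be typing a follow-up message.
        return "settling"
    if collected == expected:
        return "complete"
    # Fewer or more numbers than expected: escalate to verification.
    return "needs_verification"
```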

This is not a brittle script that breaks on the first unexpected agent response — it is an engineered system with well-defined states, structured logging, AI assistance, training pipelines, and continuous-improvement tooling.

The architecture — isolated workers driving real interfaces, AI used surgically with full feedback capture, a single canonical database, and a desktop app built around how operators actually work — applies anywhere repetitive human workflows happen inside web interfaces. Customer service is one expression of it; claims processing, account remediation, and marketplace operations are others.

ThinkGenius builds practical automation platforms that combine browser automation, AI, database engineering, and operational tooling to solve real business problems.